(Big) Data fusion

The Copernicus programme, the largest single Earth Observation programme to date, is delivering an unprecedented wealth of imagery. During the ESA Earth Observation Φ-week, Josef Aschbacher, Director of ESA's Earth Observation Programmes, announced that the Sentinel missions are delivering 150 TB of satellite data per day! Cloud computing platforms and Artificial Intelligence (AI) are overtaking traditional tools for tackling the challenges of Big Data and for extracting meaningful information, taking advantage of the high revisit frequency these missions provide.

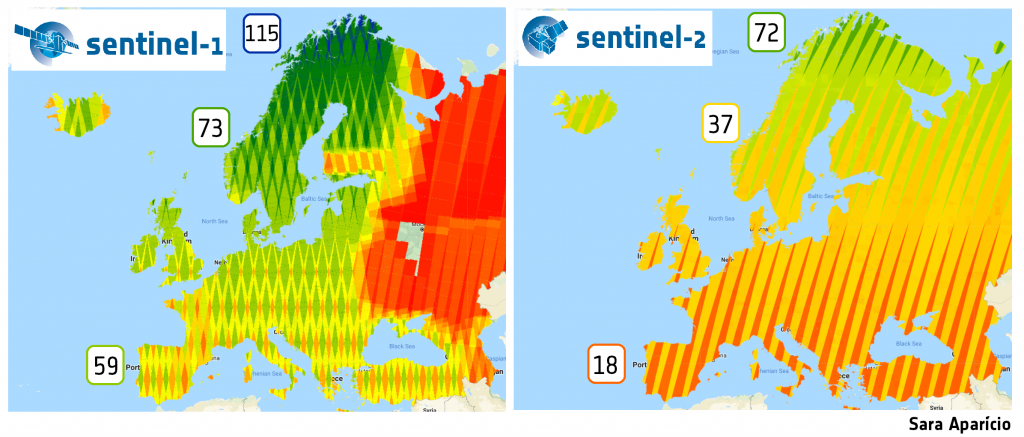

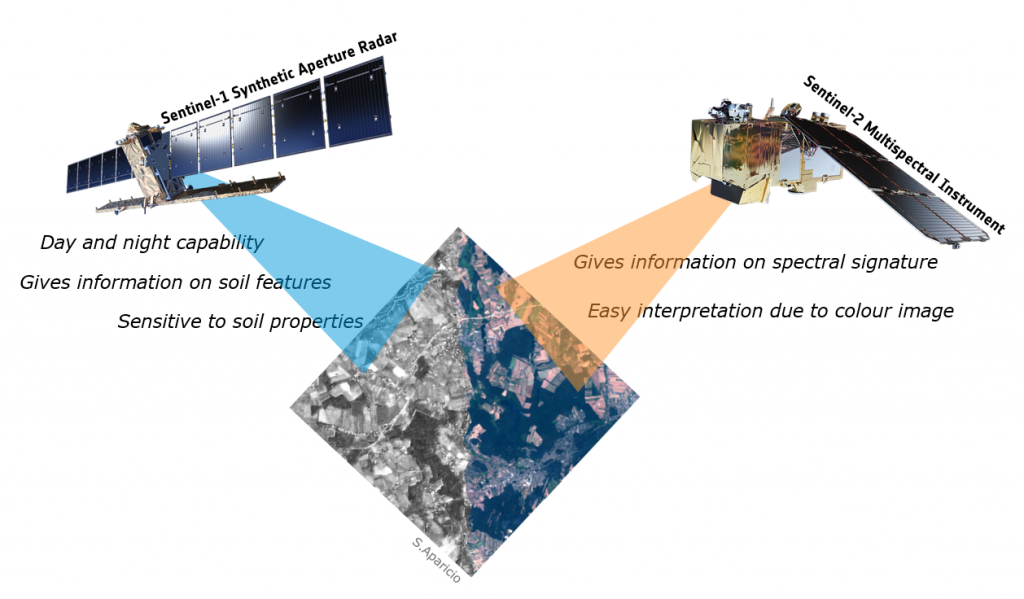

Research is underway to identify Machine Learning (ML) techniques for land cover classification that fuse satellite data of different natures, namely synthetic aperture radar (SAR) data from Sentinel-1 and multispectral data from Sentinel-2, both part of the Copernicus programme. Google Earth Engine, the platform used for this work, provides many ML algorithms as well as the satellite imagery itself, which makes it very useful for extracting land cover from different sources of imagery. One of the main goals is to understand and quantify the improvement in accuracy of the best-performing model (measured by its overall accuracy) across different scenarios of bands and spectral indices for multi-temporal land cover classification.
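To make the fusion idea concrete, here is a minimal pure-Python sketch, assuming pixel-level (feature-stacking) fusion: each pixel's Sentinel-1 backscatter (VV, VH, in dB) is concatenated with Sentinel-2 reflectances (B4 red, B8 near-infrared) and, optionally, an index such as NDVI. The band selection, the helper names, and the toy nearest-centroid classifier are all hypothetical stand-ins for the ML algorithms available in Google Earth Engine; this is not the author's method. Overall accuracy is computed as correctly classified samples divided by total samples (equivalently, the trace of the confusion matrix over its sum).

```python
from math import dist
from statistics import mean

# Assumed feature set (hypothetical choice): Sentinel-1 polarisations in dB
# and two Sentinel-2 bands (B4 = red, B8 = near-infrared) as reflectance.
S1_BANDS = ("VV", "VH")
S2_BANDS = ("B4", "B8")


def ndvi(nir: float, red: float) -> float:
    """Normalized Difference Vegetation Index: (NIR - red) / (NIR + red)."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total


def fuse_pixel(s1: dict, s2: dict, use_ndvi: bool = True) -> list[float]:
    """Pixel-level fusion: concatenate SAR and optical features, plus NDVI."""
    features = [s1[b] for b in S1_BANDS] + [s2[b] for b in S2_BANDS]
    if use_ndvi:
        features.append(ndvi(s2["B8"], s2["B4"]))
    return features


def train_centroids(samples: list[list[float]], labels: list[str]) -> dict[str, list[float]]:
    """Toy classifier 'training': mean feature vector for each class."""
    by_class: dict[str, list[list[float]]] = {}
    for x, y in zip(samples, labels):
        by_class.setdefault(y, []).append(x)
    return {c: [mean(col) for col in zip(*xs)] for c, xs in by_class.items()}


def predict(centroids: dict[str, list[float]], x: list[float]) -> str:
    """Assign the class whose centroid is nearest in Euclidean distance."""
    return min(centroids, key=lambda c: dist(centroids[c], x))


def overall_accuracy(y_true: list[str], y_pred: list[str]) -> float:
    """Overall accuracy: correctly classified samples / total samples."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)
```

In practice, dB backscatter and 0 to 1 reflectances sit on very different scales, so features would normally be standardised before any distance-based classifier. Re-running the evaluation with `use_ndvi=False`, or with only the SAR or only the optical features, mirrors the band and index scenarios described above.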

This will make it possible to produce large-scale, high-resolution land-cover maps cost-effectively, taking advantage of two completely different types of sensor and of the higher revisit frequency obtained by merging data from these constellations.

Post contributed by Sara Aparicio.